So at the end of April 1995, I joined other early contributors to the Web in Australia in Ballina.

According to Helen Ashman and Adrian Vanzyl's report on the conference in the ACM SIGLINK Newsletter (Vol. IV No.3), there were 140 participants at the conference and at least that many more on the waiting list!

Again it's hard to convey nowadays just how small the Web community was at this time (early 1995), especially in Australia. So it was with huge excitement that I found out there was going to be a conference: The first Australian World Wide Web Conference (AusWeb95).

I submitted an extended abstract for a paper on the Hellenistic Greek Linguistics site as a case study of how to run a collaborative academic project on the Web. It talked about the mailing list archives and even how I achieved Greek display via GIFs of each character along with a Perl program that would translate BETAcode (which is what was used for representing Ancient Greek in ASCII at the time) into HTML IMG elements referencing the GIFs.
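The original Perl program isn't reproduced here, but the idea can be sketched in Python. This is only an illustration under stated assumptions: the letter assignments are the standard Beta Code ones, while the `gifs/` directory, the `<name>.gif` file naming, and the decision to simply drop diacritic marks are all hypothetical and not taken from the original site.

```python
import html

# Standard Beta Code letter assignments (ASCII letter -> Greek letter name).
BETA_LETTERS = {
    "a": "alpha", "b": "beta", "g": "gamma", "d": "delta", "e": "epsilon",
    "z": "zeta", "h": "eta", "q": "theta", "i": "iota", "k": "kappa",
    "l": "lambda", "m": "mu", "n": "nu", "c": "xi", "o": "omicron",
    "p": "pi", "r": "rho", "s": "sigma", "t": "tau", "u": "upsilon",
    "f": "phi", "x": "chi", "y": "psi", "w": "omega",
}

# Beta Code marks for breathings, accents, iota subscript, diaeresis and
# capitals. This sketch just skips them (an assumption; a real converter
# would need a strategy for accented characters).
DIACRITICS = set(")(/\\=|+*")


def betacode_to_img(text, gif_dir="gifs"):
    """Turn a Beta Code string into a run of HTML IMG elements.

    gif_dir and the '<letter name>.gif' naming are hypothetical.
    """
    out = []
    for ch in text:
        if ch in DIACRITICS:
            continue  # ignored in this sketch
        name = BETA_LETTERS.get(ch.lower())
        if name is None:
            # Spaces, punctuation, digits: pass through as escaped text.
            out.append(html.escape(ch))
        else:
            out.append(f'<IMG SRC="{gif_dir}/{name}.gif" ALT="{ch}">')
    return "".join(out)


print(betacode_to_img("lo/gos"))
```

Each Greek letter becomes one image reference, which is why a single word turned into a long run of IMG elements in the page source.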

My paper was accepted, so I just had to work out, as an undergraduate student, how to fund it: flying cross country, accommodation, and the conference registration itself, as there was no student discount.

The linguistics department said they had no money to send undergraduate students to conferences. Phil Dufty at UCS offered to fund my flight. The conference organisers arranged for me to stay at a local caravan park rather than the conference hotel.

I was continuing to dive further into SGML at the same time as trying to keep up with developments happening on the Web (despite not being able to participate in the W3C).

I was also running courses on HTML at the university that were very spec-based. Looking back I was very pedantic about SGML and markup and the HTML specs and was quite a jerk about it.

But I was getting frustrated at the amount of bad information out there!

At the very start of 1995, I had launched the Hellenistic Greek Linguistics site. Here's the announcement to the LINGUIST LISTSERV (January 2nd, 1995):

ANNOUNCING: Hellenistic Greek Linguistics on the Internet [with apologies for any multiple postings]

I am pleased to announce new resources designed to bring together scholars interested in the study of Hellenistic (including New Testament) Greek Linguistics.

These resources include World Wide Web pages (accessible with such programs as Lynx, Mosaic and Netscape) as well as a mailing list. As well as general discussion, the list (which is archived on the Web pages) provides a forum for discussing the new reference grammar planned as a complete revision of Blass, Debrunner and Funk's standard work.

The Web pages include bibliographies and a (newly started) electronic archive of papers.

To browse the Web pages, go to the URL:

http://tartarus.uwa.edu.au/HGrk

To subscribe to the mailing list, send a request to:

jtauber@tartarus.uwa.edu.au

and to send a message to the entire list, write to:

greek-grammar@tartarus.uwa.edu.au

Please feel free to make enquires to jtauber@tartarus.uwa.edu.au

James K. Tauber (jtauber@tartarus.uwa.edu.au) 4th year Honours Student, Centre for Linguistics University of Western Australia, WA 6009, AUSTRALIA

We had a compulsory introductory course that we made all students who got Internet accounts do. It covered the basics of netiquette, password security, email, network news, and the Web.

I remember during the Web part of the course introducing people to Lycos as the search engine, IMDb (then called the Cardiff Internet Movie Database and mirrored various places), and Yahoo (when it was running at akebono.stanford.edu/yahoo).

In 1995, I was in my final (honours) year and working at UCS doing both basic systems administration of the student systems and providing user support, including running training courses.

To be honest, I wasn't happy when I first heard about the W3C being formed.

Up until that point, discussion about specs like HTML was done out in the open and even though I was just a lurker, I could at least be on the mailing list.

The W3C formation felt like I was being shut out because, unless I worked for a "member" organization of the W3C, I could no longer be involved. I was jealous of the people that would get to work with Tim Berners-Lee, Dan Connolly and others on defining HTML and other technologies.

But I was still determined to be the local expert.

I immediately applied for the UCS job, and had to do a test which involved giving my responses to real user support questions. But after a few weeks I hadn't heard anything.

I wrote another email just checking in and Gaye Harvey, who ran all user support (for staff not just students) replied and said they'd decided to postpone the hiring until 1995.

Then about an hour later, she emailed me again and said that, seeing as I was obviously still interested, would I be willing to come on board immediately and they'd finish the rest of the hiring in 1995.

Weeks later Gaye told me that I was "the only non-CS student that applied for the job and it showed".

Two significant things happened in October 1994.

Firstly, University Computing Services advertised that they were looking to expand their part-time student user support team (I think it was still just Adam and he was probably due to graduate) and wanted applicants for multiple positions.

Secondly, the World Wide Web Consortium (W3C) was formed.

Around this time, I wrote my first journal article. A journal called Text Technology was doing a special edition on TEI and, after a call was put out on the TEI mailing list, I submitted an article about how I marked up Dante's Divine Comedy and the Greek New Testament using TEI. It was accepted and published in 1995.

I joined mailing lists discussing the HTML spec and dove into the HTML 2.0 Internet Draft. The Internet Draft talked about SGML, so I dove into SGML.

The only book in the University library on SGML was Martin Bryan's SGML: An Author's Guide to the Standard Generalized Markup Language so that became my handbook.

I became fascinated with the SGML philosophy of separating presentation from semantic markup.

And quite apart from the Web, I found out about SGML being used by things like the Text Encoding Initiative (TEI) which was immediately interesting to me because of application to corpus linguistics and biblical studies.

I was devouring whatever information I could about the Internet and how it worked: how IP routing worked, how TCP worked, how DNS worked. But the thing that really fascinated me was how the World Wide Web worked.

I decided there was just too much to the Internet to understand everything, so I decided to just focus on the technologies behind the Web.

It is important to point out that even at this stage, the World Wide Web was just one of a number of "information systems" on the Internet. Deciding to dig deep on Web technologies was in the same class as digging deep on USENET or FTP or Gopher or WAIS.

But when I talked to my friends, even the ones that were using the Internet a lot, they didn't know much about the Web. It was just obscure yet exciting enough that I decided to focus on trying to become an expert on it.

I didn't have to run term for very long. At some point in 1994, University Computing Services decided to offer SLIP/PPP on the student dialup.

I can't be sure but I think I had a 14.4kbps modem at this stage.

There were no firewalls at this point, no DHCP and no NAT. Every computer on the network at UWA had its own static IP address. That meant that if you wanted SLIP/PPP access, you had to get assigned a static IP. A single subnet of 254 addresses was allocated for undergraduate students, and I got .1.
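For anyone wondering where 254 comes from: it's the number of usable host addresses in a subnet with 8 host bits, once the network and broadcast addresses are set aside. A quick check with Python's standard ipaddress module, assuming the student subnet was what we'd now write as a /24 (the 192.0.2.0/24 range below is a reserved documentation range, not UWA's actual subnet):

```python
import ipaddress

# Placeholder /24 from the documentation range; the real UWA subnet
# is not given in the text.
subnet = ipaddress.ip_network("192.0.2.0/24")

hosts = list(subnet.hosts())  # excludes network and broadcast addresses

print(subnet.num_addresses)  # 256 addresses in total
print(len(hosts))            # 254 assignable to machines
print(hosts[0])              # the ".1" address
```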

X Windows was a pain to configure and it barely ran on a 4MB machine, but I was able to get it, and a termified Mosaic, running on joshua.

I remember the spinning globe. And I even remember the first document I saw that included images: The MIT Guide to Lockpicking, complete with a glorious battleship grey background.

Now I mentioned above that the connection between joshua and tartarus wasn't IP-based, it was just that of a dumb terminal.

Michael O'Reilly had a solution for that. He'd written a program called term that you would run on both ends and it basically implemented IP over a dumb terminal, proxying any connections joshua wanted to make through tartarus.

The catch was client programs on your local side (i.e. on joshua) needed to be "termified", i.e. modified to talk to term rather than the kernel's TCP/IP stack. But it worked.

And at some point, something amazing happened: I downloaded a "termified" version of Mosaic.

Of course *nix hosts have to have a name so my 486SX with 4MB RAM running Linux 0.99pl13 was called "Joshua" after the computer in the movie WarGames.

I remember installing 0.99pl13, which came out in September 1993, but I have a mailing list post from June 1993 where I mentioned a plan to port code from DOS to Linux, so I'm not sure when I first installed Linux.

My guess is I tried it out around 1993Q2 but it was late 1993 before I started using it as my primary OS.

I briefly used the SLS distribution before switching to Slackware. On a 2400 baud modem it took a LONG time to download all the floppy disk images. And I had to tie up my family's landline every time I wanted to go online.

At first I didn't know what I was going to do with Linux. I remember seeing coloured ls for the first time and thinking "so the directories are blue...what now?".

Connected to the Internet it was essentially still just a dumb terminal.

Around this time, I bought my first (100% owned by me) computer. It was a 486SX with 4MB RAM and 110MB hard drive.

I suspect I bought the computer before trying Linux because I can't imagine how I would have tried Linux on my family's computer.

But then again, I don't remember running Windows on it either. It was definitely my first Linux machine.

At some point in the first half of 1993, I was introduced, via my friend John White, to Michael O'Reilly, a CS student at UWA who would go on to co-found iiNet (Australia's second largest ISP).

Michael told John and me that we should try Linux, a Unix-like operating system we could run on our PCs.

(Yes, yes, I understand Linux is just a kernel, not the operating system, but I'm pretty sure we called GNU/Linux just "Linux" then and I'm pretty sure we mispronounced it "Lie-nucks")

I remember Walnut Creek CDROM ran an anonymous ftp site that had (or mirrored) a huge amount of stuff at the time.

Often you didn't use archie, you'd just go digging around on ftp.cdrom.com looking for interesting looking things to download.

There were a couple of things you could telnet into, like library catalogs.

There was also archie for finding files on remote ftp servers. Here's how it worked: anonymous ftp sites would regularly run ls -lR and put the result in their top-level directory. The archie system would go around and collect these ls -lR dumps. When you typed archie foo, it would find any anonymous ftp sites that had files containing "foo" in their name.

Remarkably this is very similar to how Napster worked seven years later.
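To make the archie idea concrete, here's a rough Python sketch of the scheme as described above: parse each site's ls -lR dump into file paths, then answer a query by substring match on file names. This is not how the real archie was implemented. The host name, the sample listing, the function names and the case-insensitive matching are all made up for illustration.

```python
def parse_ls_lr(dump):
    """Return the paths of regular files listed in an `ls -lR` dump."""
    paths = []
    current_dir = "."
    for line in dump.splitlines():
        line = line.rstrip()
        if not line or line.startswith("total "):
            continue
        if line.endswith(":"):
            # A "dirname:" header starts the listing of a new directory.
            current_dir = line[:-1]
            continue
        # mode, links, owner, group, size, month, day, year/time, name
        fields = line.split(None, 8)
        if len(fields) < 9 or fields[0].startswith("d"):
            continue  # skip directories and anything malformed
        paths.append(f"{current_dir}/{fields[8]}")
    return paths


def build_index(dumps):
    """Map each host name to the file paths found in its dump."""
    return {host: parse_ls_lr(dump) for host, dump in dumps.items()}


def archie(index, term):
    """Find (host, path) pairs whose file name contains term."""
    term = term.lower()  # case-insensitive matching is an assumption
    hits = []
    for host, paths in sorted(index.items()):
        for path in paths:
            filename = path.rsplit("/", 1)[-1]
            if term in filename.lower():
                hits.append((host, path))
    return hits


# Hypothetical dump collected from a hypothetical anonymous ftp site.
SAMPLE_DUMPS = {
    "ftp.example.org": """.:
total 2
drwxr-xr-x  2 ftp  ftp   512 Jan  2  1995 pub
-rw-r--r--  1 ftp  ftp  1024 Jan  2  1995 ls-lR

./pub:
total 1
-rw-r--r--  1 ftp  ftp  4096 Jan  2  1995 foo-1.0.tar.gz
""",
}

index = build_index(SAMPLE_DUMPS)
print(archie(index, "foo"))
```

The key point is that the index is built centrally from listings the sites publish themselves, while the files stay where they are. The search only tells you which host to ftp to.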

Email and Usenet were the big things for me initially. I used elm for email and tin for news.

As best I can recall, Usenet groups were the way I initially found out about stuff, which at the time was either things you could download via anonymous ftp (back to tartarus, I still had to use XMODEM or whatever to get it to my PC) or topical mailing lists (or LISTSERVs) you could join.

Mailing lists then became a source for finding out about other mailing lists, or new files to download via anonymous ftp.

At first, my only point of access was a couple of dumb terminals on campus. Fortunately, my dad was able to borrow a 2.4kbps modem for use on our family's PC (which I think was a 386 running Windows 3.1).

Now the dialup access wasn't IP-based so the computer at home was also acting just as a dumb terminal with XMODEM (and maybe Kermit) for file transfer. I can't remember what program I used but I'm pretty sure it was DOS-based, not Windows.

Now the Internet in Australia at the time was the Australian Academic and Research Network (AARNet).

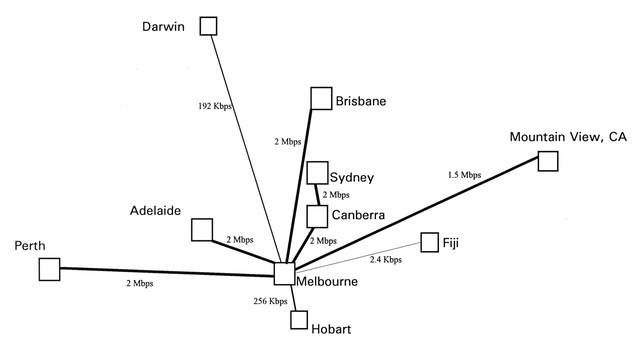

UWA was the hub in Perth. Here's the network diagram from around the time I was first on it:

via http://en.wikipedia.org/wiki/File:AARNet_Network_1993.png

That's right, the whole state had an uplink of 2Mbps. The entire country had an uplink of 1.5Mbps. And Fiji had just a 2.4kbps uplink.

I was an undergraduate student at the University of Western Australia (UWA) in late 1992 and had, a few months earlier, switched my major from physics to linguistics (which will turn out to be important to this story).

Phil Dufty, the manager of University Computing Services at UWA, believed all students should have access to the Internet, a forward-thinking idea at a time when it was, for the most part, confined to grad students and faculty, at least at Australian universities.

So one day in November 1992, I walked into a little office manned by Adam Soudure (who worked part-time as the first student sys admin) who created an account for me on tartarus, the Ultrix machine set aside for undergraduates.

I'm just heading back to Boston after a trip to Santa Clara that included attending the W3C's 20-year anniversary celebration. It got me thinking about my own history with the Web, and over time this stream will record my reminiscences of it.

It starts, though, with getting on the Internet.